Relevant Bachelor's degree, preferably in CS, Engineering, or Information Systems.

Proficiency with Python, SQL, Java or Scala.

3 or more years of experience in data streaming tools and processes (Kafka, Airflow, ELK, Spark, etc.)

3 or more years of experience in extracting data from unstructured data sources (S3, ORC, Parquet files, etc.)

Experience creating and optimizing big data processes, pipelines and architectures

Experience with big data solutions such as Snowflake, Redshift, Redis, Aerospike, Impala, Presto, BigQuery, Hive, etc.

3 or more years of experience in data modeling, BI solution development (DWH, ETL), and software development practices (Agile, CI/CD, TDD).

Ability to work independently and multi-task to meet deadlines

Ability to learn new technologies

Ability to work in a dynamic environment while providing solutions for complex business requirements

Not a must, but a great advantage:

Experience in real-time analytics

Experience with Tabular cubes

Experience with NoSQL data platforms such as Hadoop and Neo4j

Experience with building automated validation processes to ensure data integrity

Big Data Engineer

664107

11829

Central region

Job Details

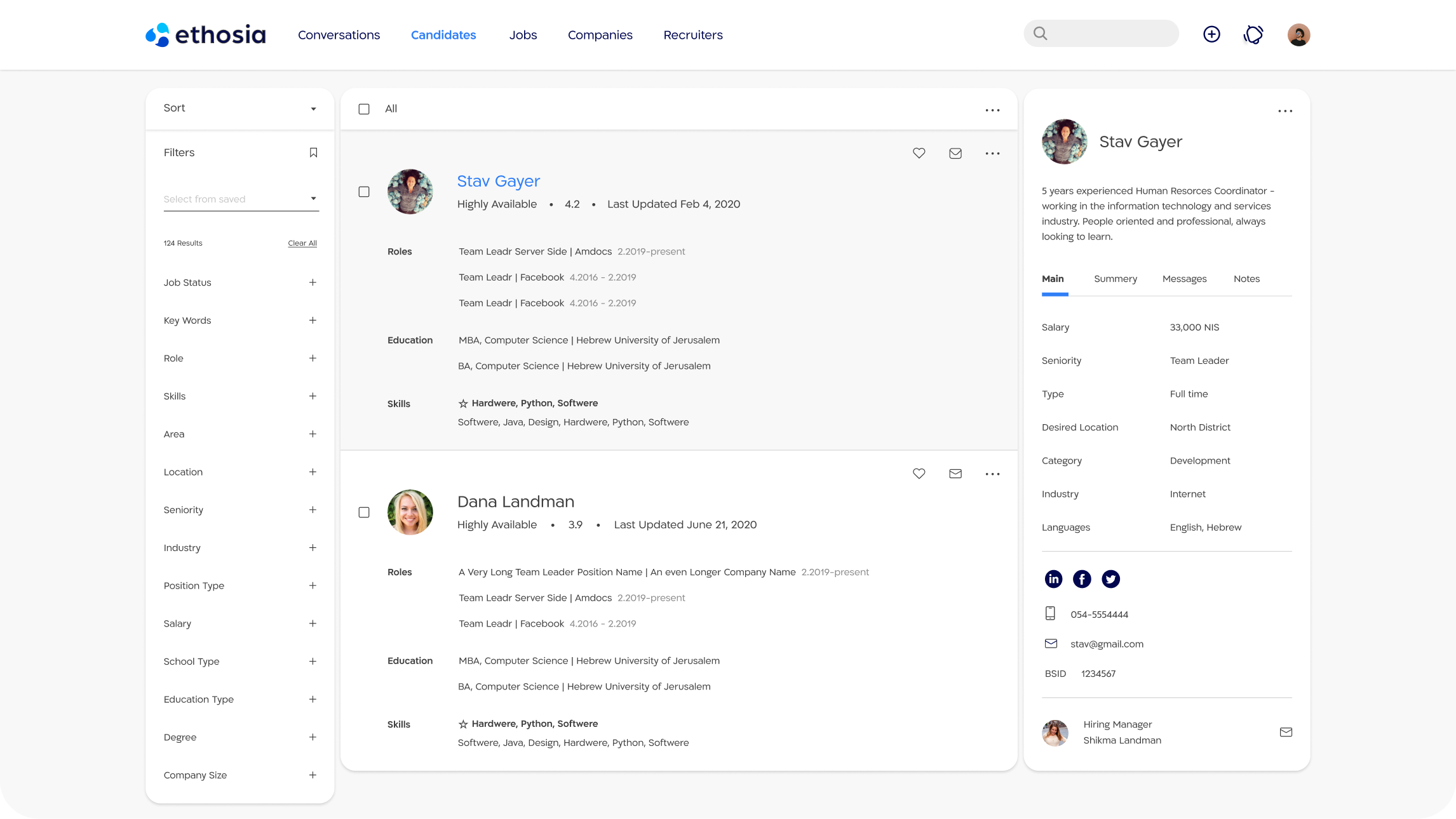

Seniority |

Type | Full-time

Location | Central region

Category | Java